Designing Backpressure in a Parallel DAG Executor

TL;DR — My parallel DAG scheduler could over-dispatch tasks.

The fix required bounded queues, a global in-flight cap, and careful avoidance of deadlocks.

Disclosure: This post was written by me. I used an LLM for editorial feedback on phrasing and structure. The technical content, code, experiments, and conclusions are my own.

When I first implemented parallel execution for my DAG executor, I used unbounded channels between the scheduler and worker threads.

The initial version worked functionally, but it had an important limitation: nothing prevented the scheduler from enqueueing an arbitrary number of ready tasks.

Under higher load, this can lead to unbounded queue growth and memory usage.

The fix was to introduce explicit backpressure rather than add an async runtime.

Contents

- The Naive Parallel Design

- The Hidden Problem: Over-Dispatch

- Introducing Backpressure

- Physical Cap vs Logical Cap

- Why the Result Channel Remains Unbounded

- Testing the Concurrency Bound

- Final Thoughts

1. The Naive Parallel Design

My DAG scheduler executes tasks with dependencies, so execution follows a Kahn-style topological progression: whenever a node’s in-degree becomes zero, it becomes ready and can be dispatched.

The design consists of:

- A single scheduler thread.

- N worker threads.

- One task channel per worker (scheduler → worker).

- One shared results channel (workers → scheduler).

Each worker owns its own queue. The scheduler distributes tasks round-robin across workers. Workers execute tasks and push results back to the scheduler via a shared mpsc channel.

In a first implementation, all task channels could be created using std::sync::mpsc::channel(), which is unbounded.

Architecturally, this is a classic producer–consumer pattern with a dispatcher:

- The scheduler produces tasks.

- Workers consume tasks.

- Workers produce results.

- The scheduler consumes results.

An alternative design would be a single shared task queue consumed by all workers. However, std::sync::mpsc does not allow cloning the receiver. Wrapping a single receiver inside Arc<Mutex<_>> would serialize recv() calls, introducing contention and eliminating true parallel dequeue. Independent per-worker queues avoid this bottleneck and reduce lock contention.

2. The Hidden Problem: Over-Dispatch

The initial implementation is functionally correct, but structurally it allows over-dispatch.

Because task channels are unbounded, the scheduler can enqueue tasks as fast as it discovers them ready.

In a wide DAG, where many nodes become ready simultaneously, this means:

- The scheduler can enqueue thousands of tasks.

- Worker queues grow without limit.

- Memory usage scales with graph width.

- Execution latency increases due to queue growth and cache pressure.

Nothing prevents the scheduler from outpacing the workers.

This is a standard producer/consumer failure mode:

If producers are faster than consumers and the buffer is unbounded, queue growth shifts the problem into memory usage.

This usually does not show up in small tests, but it becomes risky once the workload grows.

The design runs work in parallel, but it still lacks flow control.

3. Introducing Backpressure

To prevent unbounded growth, I introduced bounded per-worker queues using std::sync::mpsc::sync_channel.

Unlike channel(), which is unbounded, sync_channel(cap) enforces a fixed buffer size. When full, send() blocks or try_send() returns TrySendError::Full.

The scheduler now dispatches tasks using try_send() instead of send().

This allows the scheduler to:

- Detect when a worker queue is full.

- Avoid blocking blindly.

- Retry later after receiving a completed result.

The dispatch result is mapped to:

enum DispatchOutcome<O, E> {

Queued,

AllFull(Task<O, E>),

}If all worker queues are full, the scheduler stores the task in a pending slot and switches to receiving results.

The scheduler loop now alternates between two phases:

- Attempt dispatch while below capacity.

- If saturated, wait for completion and retry.

In addition to bounded per-worker queues, I introduced a global max_in_flight counter.

This ensures that the total number of running + queued tasks never exceeds a configurable system-wide cap.

4. Physical Cap vs Logical Cap

Two independent limits now exist in the system:

- Per-worker queue capacity.

- Global in-flight capacity.

Each worker can hold:

- 1 running task.

worker_queue_capbuffered tasks.

Therefore:

physical_cap = n_workers * (queue_cap + 1)This is the structural maximum number of tasks the system can physically hold.

The scheduler also enforces:

effective_cap = min(max_in_flight, physical_cap)The global max_in_flight defines a logical execution limit.

The physical cap defines a hard architectural limit imposed by channel capacity:

- Physical cap prevents memory explosion.

- Logical cap controls parallel pressure and resource usage.

Together, these two limits keep task admission explicit and memory usage bounded.

5. Why the Results Channel Remains Unbounded

Task channels are bounded.

The results channel is not.

If the results channel were bounded, a circular wait could occur:

- The scheduler stops dispatching because worker queues are full.

- Workers complete tasks and attempt to send results.

- The results channel is full.

- Workers block on sending results.

- The scheduler is waiting to receive results, but cannot progress.

This creates a deadlock cycle.

By keeping the results channel unbounded, completed tasks can always report back to the scheduler, ensuring forward progress.

6. Testing the Concurrency Bound

To prevent regressions, I introduced an integration test that measures maximum concurrent execution using AtomicUsize.

Instead of using sleep(), which is nondeterministic, tasks block on a shared AtomicBool gate to force overlap.

The test tracks:

- Active tasks.

- Maximum observed concurrency.

It asserts:

max_active <= max_in_flightThis lets the test measure the invariant directly at runtime.

Final Thoughts

Backpressure is common in async and reactive systems, but the same idea applies here as well:

If producers can outpace consumers and buffers are unbounded, memory becomes the failure mode.

In this scheduler, explicit bounds make queue growth and task admission easier to reason about.

# related

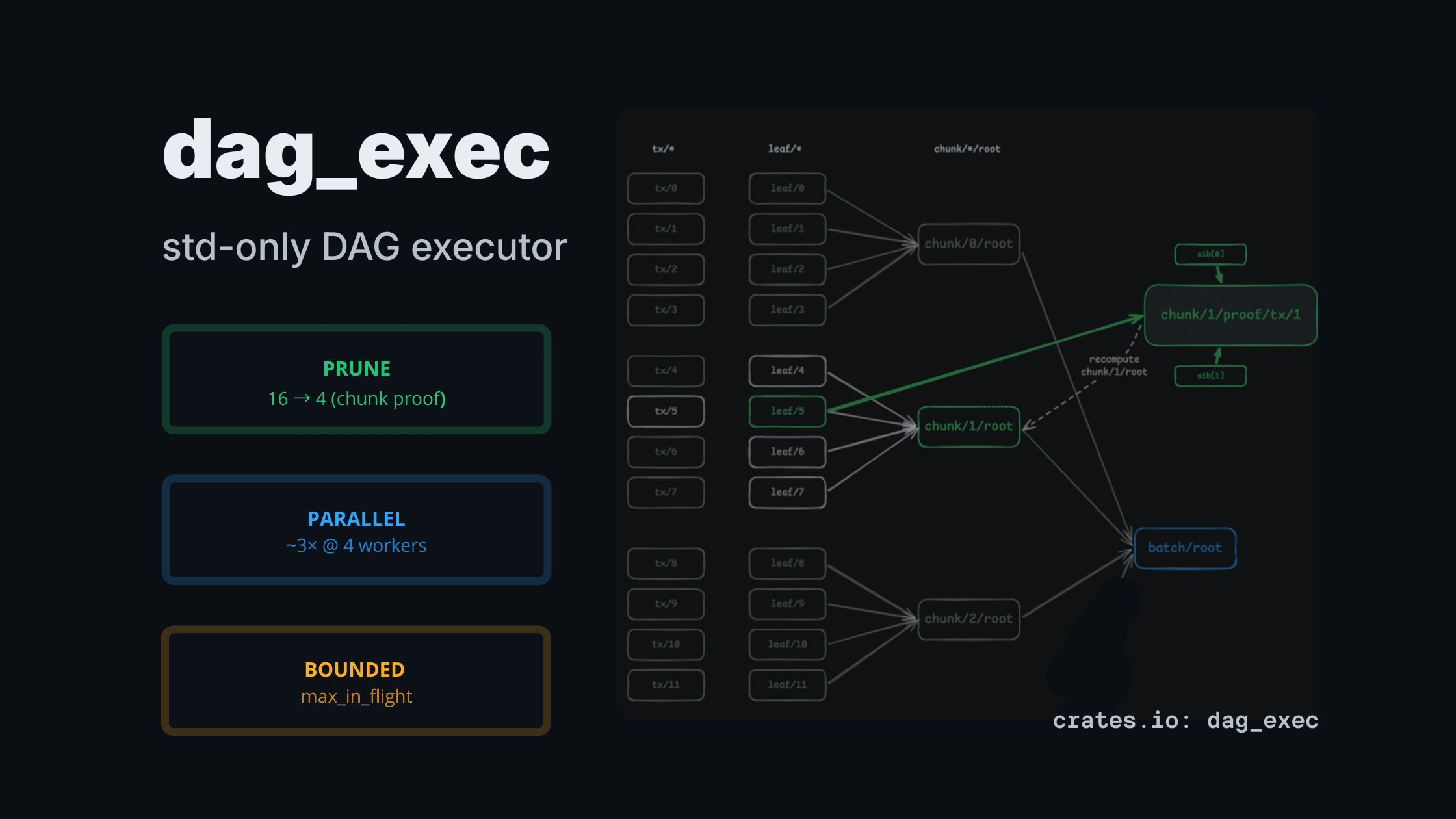

dag_exec: a std-only DAG executor for CPU-heavy pipelines (pruning + bounded parallelism)

A tiny std-only DAG executor that computes only the requested outputs (partial evaluation) and runs heavy nodes in parallel with explicit bounds.

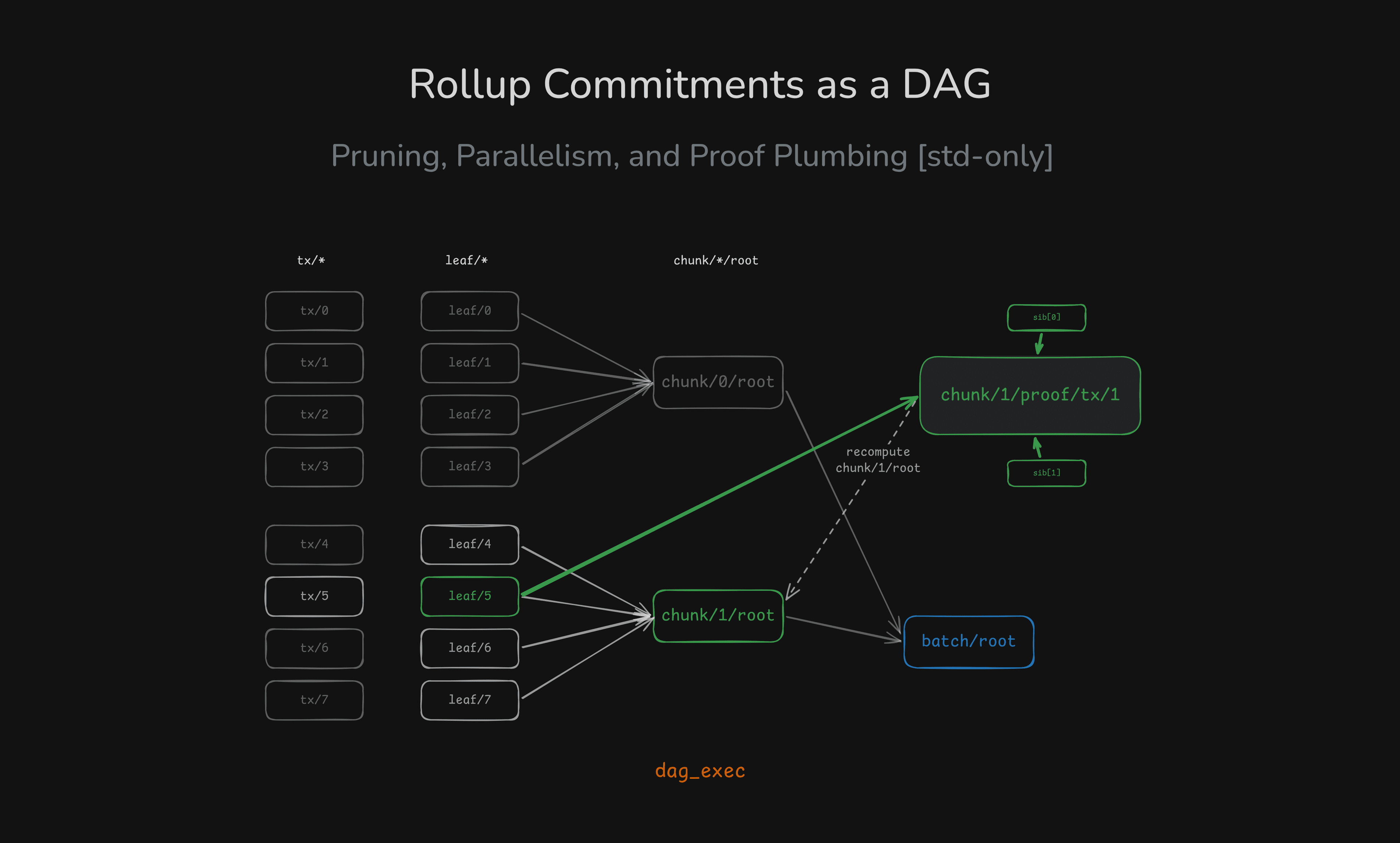

Rollup Commitments as a DAG: Pruning, Parallelism, and Proof Plumbing (Rust std-only)

A rollup-shaped batch pipeline modeled as a DAG: compute only the chunk/proof you need, and scale CPU-heavy hashing with bounded parallelism — all in std.

Testing Concurrency Invariants in a Parallel Executor

How to verify max_in_flight bounds using AtomicUsize, CAS loops, and deterministic gating without sleep().